O7A View Sync

Host Support

| Host Type | Support |
|---|---|
| AAX | Yes |
| VST2 | Yes |

Audio

| | Channels | Content |
|---|---|---|
| Input | 64 | O7A |
| Output | 64 | O7A |

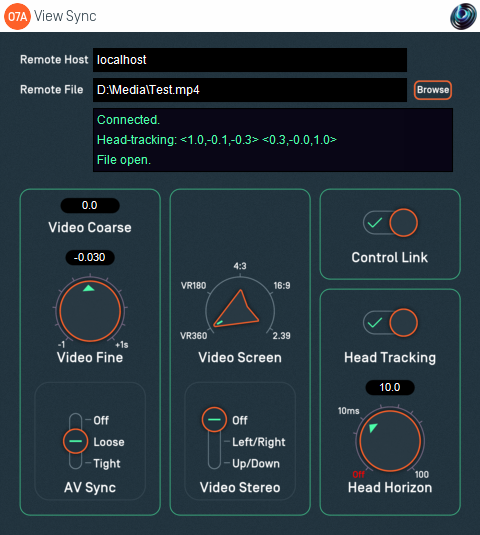

Controls

- Remote Host

- Remote File

- Video Coarse

- Video Fine

- A/V Sync

- Video Screen

- Video Stereo

- Control Link

- Head Tracking

- Head Horizon

Description

This plugin manages a link between a DAW session and a separate View application running on an ordinary display or Virtual Reality (VR) headset, locally or remotely. This link can be used to:

- Connect to a View application.

- Start video playback in the View application and synchronize DAW audio and View video.

- Receive head tracking orientation back from the View application and compensate for head orientation by rotating the O7A audio stream.

- Link certain controls on other O7A plugins to the View application so that they can be adjusted there.

The only audio processing that this plugin performs is rotation of the audio stream when head tracking is in use. Otherwise, it passes the audio through unmodified.

Connecting to a View Application

The plugin connects to a View application running on a local or remote host. It does not matter whether the plugin or the application is started first, as the plugin makes repeated attempts to connect. However, for a successful connection, the plugin and View must use the same port number (27103 by default). The connection uses TCP, so networking must be possible on this port (in particular, firewalls may need configuration).
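The connection behavior described above (repeated attempts until View is reachable) can be illustrated with a minimal Python sketch. It is not the plugin's implementation: the function name `connect_with_retry`, the attempt count and the retry delay are hypothetical, and the messages the plugin and View actually exchange over the connection are not documented here.

```python
import socket
import time

DEFAULT_PORT = 27103  # default View port stated in this document


def connect_with_retry(host, port=DEFAULT_PORT, attempts=10, delay_s=1.0):
    """Try to open a TCP connection, retrying on failure.

    Hypothetical sketch: shows only the retry pattern, not the View protocol.
    Returns a connected socket, or None if every attempt fails.
    """
    for attempt in range(attempts):
        try:
            # Resolves the host name and opens a TCP connection.
            return socket.create_connection((host, port), timeout=delay_s)
        except OSError:
            # Refused, unreachable or timed out: View may not be running yet
            # (or a firewall may be blocking the port), so wait and retry.
            if attempt < attempts - 1:
                time.sleep(delay_s)
    return None
```

For example, `connect_with_retry("localhost")` keeps trying the default port until View starts listening or the attempts run out. If it keeps returning `None` for a remote host, one thing to check is whether a firewall is blocking the port.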

The remote host is configured by entering text into the Remote Host text box. The status of the connection is described in the main status box in the middle of the user interface.

Video Files

Entering a filename into the Remote File text box will cause the View application to look for a video file on its file system. If the connection is remote, please note that the View application will be looking at the file system on the remote machine.

If the connection is local (so the Remote Host text box says "localhost") a "Browse" button is available.

The View application reads the video directly; it is not streamed from the DAW plugin. Consequently, the View application needs permission to access the file, which may require changes in macOS Settings. Also, if the View application is running on a remote machine, the filename must be correct for that machine, not the one running the plugin. The status of the video is shown in the main status box in the middle of the user interface.

The video format present in the file should be configured using the Video Screen and Video Stereo options.

Video Synchronization

Once open, the video can be synchronized with the audio in the DAW. This allows audio to be edited "to picture" for both classic film and 360 video for Virtual Reality.

Basic time synchronization maps the time within the DAW's project timeline directly to the video time. For instance, if playback is ten seconds from the start of the DAW project, the video from ten seconds in will be shown.

However, you may not want to place the audio for the video at the start of the DAW project. To allow this, the Video Coarse control allows the video and audio to be offset by a large interval.

There may also be synchronization issues between audio and video, for instance because of a latent audio stack. To compensate for this, the Video Fine control can be used to make small shifts to the offset. In fact, the coarse and fine controls are just added together before they are used; two controls are provided for convenience.
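The mapping from DAW time to video time can be written as a one-line function. This is a sketch assuming both offsets are expressed in seconds (the document does not specify the controls' units); the function name `video_time_s` is hypothetical. It uses the sign convention given under the Video Coarse and Video Fine controls: positive offsets advance the video and negative offsets delay it.

```python
def video_time_s(project_time_s, coarse_s, fine_s):
    """Return the video time to show for a given DAW project time.

    Assumes all values are in seconds (hypothetical; units are not stated).
    The coarse and fine offsets are simply added together. A positive total
    advances the video (a later frame is shown at the same project time);
    a negative total delays it.
    """
    offset_s = coarse_s + fine_s
    return project_time_s + offset_s
```

For instance, if the audio for the video starts one minute into the project, a Video Coarse setting of -60 s makes project time 70 s show video time 10 s, and a small Video Fine value can then trim any remaining latency.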

Audio and video can drift out of sync, particularly on a busy computer system. The A/V Sync control determines how this is handled. If you find that A/V sync is inconsistent or that playback sometimes "judders", try changing this setting.

Head Tracking

The View applications can send orientation data back to the plugin. The VR version of the View application (ViewVR) can use the head tracker built into the VR headset. In the View application's Frontal View mode, two-degree-of-freedom (2DOF) orientation data matching the camera can be controlled using the mouse.

When head tracking is turned on, the 3D audio stream being passed through the plugin is rotated in a way that compensates for the head orientation. For instance, if the head is turned to the left, the audio scene is rotated to the right so sounds that were to the left are now in front.
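For rotation about the vertical axis (yaw), the compensation has a simple closed form in the spherical-harmonic domain: each pair of components with degree ±m at order l rotates like a 2D vector by m times the angle. The Python sketch below assumes ACN channel ordering (which gives (7 + 1)² = 64 channels at 7th order, matching the channel count above) and a yaw-only head movement; full head tracking also involves pitch and roll, which need general 3D rotation matrices and are not shown. Because each ±m pair shares the same normalization factor, this yaw rotation behaves identically for SN3D and N3D normalization. The function names are hypothetical.

```python
import math


def acn_index(l, m):
    """ACN channel index for spherical-harmonic order l and degree m."""
    return l * l + l + m


def compensate_yaw(frame, head_yaw_rad):
    """Rotate one ambisonic sample frame to compensate for head yaw.

    frame: (order + 1)**2 coefficients in ACN order (assumed ordering;
           64 coefficients for a 7th-order, O7A-sized stream).
    head_yaw_rad: head rotation about Z; positive means turned to the left.
    The scene is rotated by -head_yaw_rad, so a source on the left moves to
    the front when the head turns 90 degrees to the left.
    """
    order = math.isqrt(len(frame)) - 1
    if (order + 1) ** 2 != len(frame):
        raise ValueError("frame length must be a square number")
    out = list(frame)  # m = 0 components are unaffected by yaw
    for l in range(1, order + 1):
        for m in range(1, l + 1):
            c = frame[acn_index(l, m)]   # cos(m * azimuth) component
            s = frame[acn_index(l, -m)]  # sin(m * azimuth) component
            a = m * head_yaw_rad
            out[acn_index(l, m)] = c * math.cos(a) + s * math.sin(a)
            out[acn_index(l, -m)] = -c * math.sin(a) + s * math.cos(a)
    return out
```

With a source hard left in a first-order frame `[W, Y, Z, X] = [1, 1, 0, 0]`, `compensate_yaw(frame, math.pi / 2)` returns approximately `[1, 0, 0, 1]`, so the source is now in front, as in the example above.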

Head tracking data can take a while to arrive, and its effect will not be heard until the audio has passed through the system audio stack. Consequently, head tracking can be latent. To help compensate for this, a Head Horizon forward predictor is available, which attempts to estimate where the head will be based on the data available. However, the best results are typically achieved when latency is very low (a few milliseconds), so it may be worth configuring your DAW with this in mind.
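The document does not describe how the Head Horizon predictor works. As a stand-in, the sketch below uses plain linear extrapolation of yaw from the last two head tracking samples; the function names and the choice of technique are assumptions. It also shows why longer horizons are less stable: any noise in the measured angular velocity is multiplied by the horizon.

```python
import math


def wrap_angle(a):
    """Wrap an angle in radians to the range [-pi, pi)."""
    return (a + math.pi) % (2.0 * math.pi) - math.pi


def predict_yaw(prev_yaw, prev_t, curr_yaw, curr_t, horizon_s):
    """Linearly extrapolate head yaw horizon_s seconds beyond curr_t.

    Hypothetical stand-in for the Head Horizon predictor. A horizon of 0
    disables prediction and returns the latest measurement.
    """
    if horizon_s <= 0.0 or curr_t <= prev_t:
        return curr_yaw
    # Use the shortest angular difference, so crossing +/-pi does not look
    # like an almost full-circle spin.
    velocity = wrap_angle(curr_yaw - prev_yaw) / (curr_t - prev_t)
    return wrap_angle(curr_yaw + velocity * horizon_s)
```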

The current 'forward' and 'up' head direction vectors are shown in the display, in ambisonic coordinates (so X is forwards, Y is to the left and Z is up).
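Given the coordinate convention above, the head's yaw and pitch can be recovered from the 'forward' vector with two `atan2` calls. This is an illustrative Python sketch (the function name is hypothetical); roll would additionally need the 'up' vector and is omitted to keep it short.

```python
import math


def yaw_pitch_from_forward(forward):
    """Convert a head 'forward' vector to (yaw, pitch) in degrees.

    forward: (x, y, z) in ambisonic coordinates, where X is forwards,
             Y is to the left and Z is up. It need not be unit length.
    yaw: positive when the head is turned to the left.
    pitch: positive when the head is tilted up.
    """
    x, y, z = forward
    yaw = math.degrees(math.atan2(y, x))
    pitch = math.degrees(math.atan2(z, math.hypot(x, y)))
    return yaw, pitch
```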

Control Link

If the Control Link switch is enabled on this sync plugin (and on no other within the DAW) then this plugin will be used to manage communication between the View application and other O7A plugins in the same process.

More Than One Sync Plugin

More than one sync plugin may be used in a project.

Using head tracking on more than one plugin at a time can make sense in some contexts. For instance, you can send from an O7A master track to multiple binaural decoder tracks, each with independent head tracking for different VR headsets.

However, only one O7A sync plugin may have its Control Link switch enabled at once. If more than one is enabled, control links will not work.
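The rule above (control links work only when exactly one sync plugin has Control Link enabled) can be modeled in a few lines. This is a hypothetical model of the documented behavior, not how the plugins coordinate internally; the function name and the plugin names in the usage example are invented for illustration.

```python
def control_link_owner(sync_plugins):
    """Return the name of the sync plugin that manages the control link.

    sync_plugins: mapping of plugin name -> whether its Control Link switch
    is on. Returns None when no plugin, or more than one plugin, has the
    switch on, because in either case control links do not work.
    """
    enabled = [name for name, on in sync_plugins.items() if on]
    return enabled[0] if len(enabled) == 1 else None
```

`control_link_owner({"Sync A": True, "Sync B": False})` returns `"Sync A"`, while enabling the switch on both returns `None`.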

Connections Between Plugins

When Control Link is used to communicate between the O7A View Sync plugin and other plugins, the plugins involved must be in the same Windows or macOS process. Some DAWs allow plugins to be hosted in separate processes. If you use this facility, make sure that the plugins you want to link are all hosted in the same process.

O3A and O7A control links work independently of each other. If you have O3A plugins in your project, they will not connect to the O7A View Sync plugin, and vice versa. O3A View Sync and O7A View Sync can be used simultaneously, although they will need to connect to different View instances.

Is The Plugin Ignoring Me?

The plugin needs to be running for things to happen. If it is switched off or bypassed, it will not work properly.

Also, to reduce CPU usage, some DAWs switch off plugins dynamically when they appear to be idle, and such DAWs cannot see the network communication this plugin uses. You can discourage them from switching this plugin off by making sure some audio is running through it.

The plugin is available in the O7A View plugin library.

Controls

Control: Remote Host

The Remote Host text tells the plugin where to find a View application to connect to. This can be written as:

- The text "localhost", meaning the machine the plugin is running on.

- A network hostname. If you are on a local network, this may be a simple machine label (e.g. "server7"). Otherwise, it may be a machine hostname similar to those seen within web URLs (e.g. "nosuch.blueripplesound.com").

- An IP address, which typically takes the form of four numbers separated by periods, such as "192.168.1.4".

- Any of the above, followed by a colon and a number. This is used when you want to specify which port to connect to on the remote machine. For instance, "server7:27104" means that the plugin should connect to machine server7, but use port 27104 rather than the usual port (27103).

If the port is not specified, the default (27103) is used. To connect, the port must match the one shown on screen in the View application being connected to.
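The accepted Remote Host formats can be summarized by a small parser. This Python sketch is hypothetical: the function name is invented, it splits on the last colon, and it does not attempt bracketed IPv6 addresses, whose handling by the plugin is not documented.

```python
DEFAULT_PORT = 27103  # default View port stated in this document


def parse_remote_host(text):
    """Split Remote Host text into (host, port).

    Accepts "localhost", a hostname or an IPv4 address, optionally followed
    by ":port". If no port is given, the default port is used.
    """
    text = text.strip()
    host, sep, port_text = text.rpartition(":")
    if not sep:
        # No colon: the whole text is the host.
        return text, DEFAULT_PORT
    if not host or not (port_text.isascii() and port_text.isdigit()):
        raise ValueError(f"invalid remote host: {text!r}")
    port = int(port_text)
    if not 0 < port < 65536:
        raise ValueError(f"port out of range: {port}")
    return host, port
```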

Control: Remote File

If a filename is entered into the Remote File field, a connected View application will attempt to find a video file at this location.

Please note that the video file needs to be accessible to the View application rather than the plugin. The View application is unlikely to be running in the same directory as the plugin and need not even be on the same computer.

On macOS, you may need to change Settings to allow View to access video files, depending on their location.

Controls: Video Coarse and Video Fine

The coarse and fine video controls are added together and then used as an offset to the clock used to control the video stream. Negative values delay the video and positive values advance it.

Two controls are provided for ease of use; the fine control is normally reserved for fine A/V sync tuning.

Control: A/V Sync

This control determines how the View application reacts when it detects that audio and video are not fully in sync. This setting is offered because correcting synchronization issues too often can cause the video to skip, making things worse rather than better. The options are:

| Setting | Description |
|---|---|
| Off | Synchronization is only used in the event of jumps in the audio position. No local synchronization is applied. |
| Loose | Some synchronization is applied and the video should rarely drift visibly out of line. |
| Tight | Tight synchronization is applied. This may cause issues for some video modes. |
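The trade-off between the three settings can be sketched as a resynchronization policy. The tolerances and the jump threshold below are invented for illustration (View's actual values and logic are not documented), and treating a jump as a large drift is a simplification. The point is that a wider tolerance triggers fewer corrections, and therefore fewer visible skips, at the cost of more drift.

```python
# Hypothetical values for illustration only; View's real thresholds and
# logic are not documented.
DRIFT_TOLERANCE_S = {"Loose": 0.1, "Tight": 0.02}
JUMP_THRESHOLD_S = 1.0


def should_resync(mode, target_time_s, video_time_s):
    """Decide whether the video player should re-align with the audio.

    mode: "Off", "Loose" or "Tight".
    target_time_s: where the video should be, given the DAW time and offsets.
    video_time_s: where the video player actually is.
    """
    drift_s = abs(target_time_s - video_time_s)
    if drift_s >= JUMP_THRESHOLD_S:
        # Treated as a jump (e.g. the DAW playhead was moved): corrected
        # in every mode, including Off.
        return True
    if mode == "Off":
        return False
    # Wider tolerance means fewer corrections, so fewer visible skips.
    return drift_s > DRIFT_TOLERANCE_S[mode]
```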

Controls: Video Screen and Video Stereo

These controls must be set to match the intended presentation of the video frames in the file. When stereographic video is in use, each frame is subdivided into two halves, one for each eye. Each image may then be interpreted in different ways, for instance as a 16:9 rectangular screen, or as a 360 video to be shown as a VR experience.

These settings should be set accurately regardless of the perspective used in the View application.

The screen options are:

| Setting | Description |
|---|---|
| VR360 | The video is assumed to contain a full spherical VR video, mapped onto the rectangular video frame. The frame is assumed to map elevation to pixels linearly. The top of the frame is assumed to correspond to the top of the sphere and the middle of the frame to the front of the sphere. |
| VR180 | The video is assumed to contain a frontal hemispherical VR video, mapped onto the rectangular video frame. The frame is assumed to map elevation to pixels linearly. The top of the frame is assumed to correspond to the top of the sphere and the middle of the frame to the front of the sphere. |
| 4:3 | The video is assumed to be intended for use on a 4:3 rectangular video screen. |
| 16:9 | The video is assumed to be intended for use on a 16:9 rectangular video screen. |
| 2.39 | The video is assumed to be intended for use on a 2.39:1 rectangular video screen. |
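For the VR360 and VR180 settings, the descriptions above imply a mapping from viewing direction to position within each eye's image. The Python sketch below assumes that azimuth, like elevation, maps linearly to pixels (an equirectangular layout), that pixel (0, 0) is the top-left corner, and that leftward directions appear to the left of the frame center; the function name is hypothetical.

```python
def direction_to_pixel(azimuth_deg, elevation_deg, width, height, screen="VR360"):
    """Map a viewing direction to (x, y) pixel coordinates in one eye's image.

    Azimuth uses the ambisonic convention: 0 is the front and positive values
    are to the left. Elevation is positive upwards. The frame center is the
    front and the top row is straight up. Elevation maps linearly to rows;
    azimuth is assumed to map linearly to columns.
    """
    spans = {"VR360": 360.0, "VR180": 180.0}
    if screen not in spans:
        raise ValueError(f"not a VR screen setting: {screen!r}")
    span_deg = spans[screen]
    if abs(azimuth_deg) > span_deg / 2 or abs(elevation_deg) > 90.0:
        raise ValueError("direction is outside the video")
    # Leftward (positive) azimuth moves towards the left edge (x = 0).
    x = (0.5 - azimuth_deg / span_deg) * width
    # +90 degrees elevation is the top row (y = 0), -90 is the bottom.
    y = (0.5 - elevation_deg / 180.0) * height
    return x, y
```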

The stereo (stereographic) options are:

| Setting | Description |
|---|---|
| Off | The video is not stereographic. |
| Left-Right | Each video frame is divided horizontally into left and right images corresponding to the left and right eyes. |
| Up-Down | Each video frame is divided vertically into upper and lower images corresponding to the left and right eyes. |
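The stereo settings determine which part of each frame belongs to each eye. This sketch (hypothetical function name, pixel origin assumed at the top-left) returns the rectangle of one eye's image; the screen setting then determines how that image is interpreted.

```python
def eye_region(frame_width, frame_height, stereo, eye):
    """Return (x, y, width, height) of one eye's image within a video frame.

    stereo: "Off", "Left-Right" or "Up-Down".
    eye: "left" or "right".
    """
    if eye not in ("left", "right"):
        raise ValueError(f"unknown eye: {eye!r}")
    if stereo == "Off":
        # Not stereographic: both eyes see the whole frame.
        return 0, 0, frame_width, frame_height
    if stereo == "Left-Right":
        # Left half for the left eye, right half for the right eye.
        half = frame_width // 2
        return (0 if eye == "left" else half), 0, half, frame_height
    if stereo == "Up-Down":
        # Upper half for the left eye, lower half for the right eye.
        half = frame_height // 2
        return 0, (0 if eye == "left" else half), frame_width, half
    raise ValueError(f"unknown stereo setting: {stereo!r}")
```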

Depending on the type and mode of the View application, the video can be presented in different ways.

At the time of writing, only the ViewVR application will render stereographic images as such.

Control: Control Link

If this switch is enabled on this sync plugin and on no other in the DAW, then this plugin will be used to manage communication between the View application and other O7A plugins.

Control: Head Tracking

This switch enables or disables use of head tracking data, if it is available.

Control: Head Horizon

This dial determines how far into the future the head tracking module attempts to predict head orientation. Longer predictions are less stable. The predictor can be turned off. It can help compensate for slow head tracking or latent audio.